
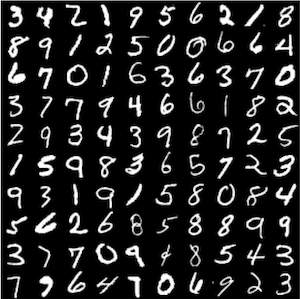

To configure the Owens cluster for the use of Caffe, use the following commands:

Batch jobs can request multiple nodes/cores and compute time up to the limits of the OSC systems. Refer to Queues and Reservations for Owens, and Scheduling Policies and Limits for more info. In particular, Caffe should be run on a GPU-enabled compute node.

An Example of Using Caffe with MNIST Training Data on Owens

Below is an example batch script (job.txt) for using Caffe; see this resource for a detailed explanation.
